Our neural net has only one hidden layer. To compute backpropagation, we write a function that takes as arguments an input matrix X, the training labels y, the output activations from the forward pass as cache, and a list of layer_sizes. The updated parameters are then returned by the updateParameters() function. More specifically, we have the following:

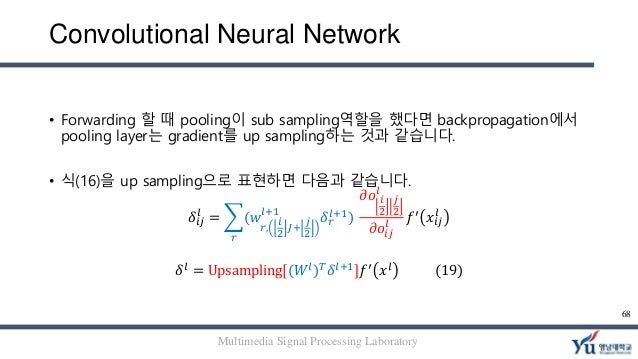

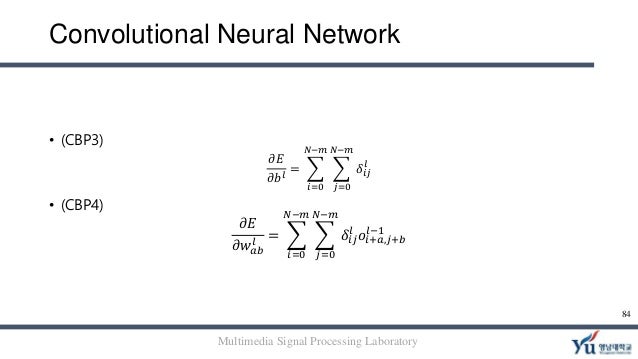
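As a rough illustration of the backpropagation function described here, the following is a minimal sketch, not the original implementation. It assumes a tanh hidden layer, a sigmoid output with cross-entropy loss, examples stored as columns of X, and a cache dictionary with keys `A1` and `A2`. The extra `parameters` argument, the helper names, and `update_parameters` (a stand-in for updateParameters()) are all hypothetical.

```python
import numpy as np

def forward_propagation(X, parameters):
    """Forward pass; returns the activations that backprop reuses as `cache`."""
    Z1 = parameters["W1"] @ X + parameters["b1"]
    A1 = np.tanh(Z1)                     # assumed hidden activation
    Z2 = parameters["W2"] @ A1 + parameters["b2"]
    A2 = 1.0 / (1.0 + np.exp(-Z2))       # assumed sigmoid output
    return {"A1": A1, "A2": A2}

def cross_entropy(A2, y):
    """Average cross-entropy loss over the m examples (columns)."""
    m = y.shape[1]
    return float(-np.sum(y * np.log(A2) + (1 - y) * np.log(1 - A2)) / m)

def backward_propagation(X, y, cache, parameters, layer_sizes):
    """Gradients of the loss with respect to W1, b1, W2, b2."""
    n_x, n_h, n_y = layer_sizes          # assumed layout: (input, hidden, output)
    m = X.shape[1]
    A1, A2 = cache["A1"], cache["A2"]    # cached during the forward pass

    dZ2 = A2 - y                         # sigmoid + cross-entropy shortcut
    dW2 = dZ2 @ A1.T / m
    db2 = np.sum(dZ2, axis=1, keepdims=True) / m
    dZ1 = (parameters["W2"].T @ dZ2) * (1 - A1 ** 2)   # tanh'(z) = 1 - tanh(z)^2
    dW1 = dZ1 @ X.T / m
    db1 = np.sum(dZ1, axis=1, keepdims=True) / m

    assert dW1.shape == (n_h, n_x) and dW2.shape == (n_y, n_h)
    return {"dW1": dW1, "db1": db1, "dW2": dW2, "db2": db2}

def update_parameters(parameters, grads, learning_rate=0.1):
    """One gradient descent step; returns a new parameter dictionary."""
    return {name: value - learning_rate * grads["d" + name]
            for name, value in parameters.items()}

# Tiny demo on random data (3 inputs, 4 hidden units, 1 output).
rng = np.random.default_rng(0)
layer_sizes = (3, 4, 1)
parameters = {
    "W1": rng.normal(size=(4, 3)) * 0.5, "b1": np.zeros((4, 1)),
    "W2": rng.normal(size=(1, 4)) * 0.5, "b2": np.zeros((1, 1)),
}
X = rng.normal(size=(3, 8))
y = (rng.random((1, 8)) > 0.5).astype(float)

cache = forward_propagation(X, parameters)
grads = backward_propagation(X, y, cache, parameters, layer_sizes)
parameters = update_parameters(parameters, grads)
```

Passing the weights in explicitly is a design choice of this sketch: the hidden-layer gradient needs W2, which the cache of activations alone does not provide.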

During backpropagation (red boxes in the diagrams), we use the output cached during forward propagation (purple boxes). Generally, in a deep network, we have something like this. We’ll write a function that calculates the gradient of the loss function with respect to the parameters.

In this post, we’re going to do a deep-dive on something most introductions to Convolutional Neural Networks (CNNs) lack: how to train a CNN, including deriving gradients, implementing backprop from scratch (using only numpy), and ultimately building a full training pipeline!

This post assumes a basic knowledge of CNNs. My introduction to CNNs (Part 1 of this series) covers everything you need to know, so I’d highly recommend reading that first. If you’re here because you’ve already read Part 1, welcome back!

Parts of this post also assume a basic knowledge of multivariable calculus. You can skip those sections if you want, but I recommend reading them even if you don’t understand everything. We’ll incrementally write code as we derive results, and even a surface-level understanding can be helpful.

Buckle up! Time to get into it.

We’ll pick back up where Part 1 of this series left off. We were using a CNN to tackle the MNIST handwritten digit classification problem. Our (simple) CNN consisted of a Conv layer, a Max Pooling layer, and a Softmax layer. It takes a 28x28 grayscale MNIST image and outputs 10 probabilities, 1 for each digit.

We’d written 3 classes, one for each layer: Conv3x3, MaxPool, and Softmax. Each class implemented a forward() method that we used to build the forward pass of the CNN. With only that forward pass in place, the output looked like this:

    Past 100 steps: Average Loss 2.302 | Accuracy: 11%
    Past 100 steps: Average Loss 2.302 | Accuracy: 8%
    Past 100 steps: Average Loss 2.302 | Accuracy: 3%
    Past 100 steps: Average Loss 2.302 | Accuracy: 12%

Obviously, we’d like to do better than 10% accuracy… let’s teach this CNN a lesson.

Training a neural network typically consists of two phases:

- A forward phase, where the input is passed completely through the network.
- A backward phase, where gradients are backpropagated (backprop) and weights are updated.

We’ll follow this pattern to train our CNN. There are also two major implementation-specific ideas we’ll use:

- During the forward phase, each layer will cache any data (like inputs, intermediate values, etc.) it’ll need for the backward phase. This means that any backward phase must be preceded by a corresponding forward phase.
- During the backward phase, each layer will receive a gradient and also return a gradient. It will receive the gradient of loss with respect to its outputs ($\frac{\partial L}{\partial \text{out}}$) and return the gradient of loss with respect to its inputs ($\frac{\partial L}{\partial \text{input}}$).

Now comes the best part of this all: backpropagation! To calculate those 3 loss gradients, we first need to derive 3 more results: the gradients of totals against weights, biases, and input. We return $\frac{\partial L}{\partial \text{input}}$ from our backprop() method so the next layer can use it. This is the return gradient we talked about in the Training Overview section!
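To make the cache-then-backprop pattern concrete, here is a small sketch of a single fully connected layer, assuming its totals are computed as `t = input @ w + b`. It is not the Softmax class from this series: the `Dense` class, the `backprop(d_L_d_out, learn_rate)` signature, and the toy squared-error loss are assumptions made for illustration. Under that assumption, the three gradients of totals are $\frac{\partial t}{\partial w} = \text{input}$, $\frac{\partial t}{\partial b} = 1$, and $\frac{\partial t}{\partial \text{input}} = w$.

```python
import numpy as np

class Dense:
    """Hypothetical fully connected layer with totals t = input @ w + b."""

    def __init__(self, n_in, n_out, rng):
        self.w = rng.normal(size=(n_in, n_out)) / n_in
        self.b = np.zeros(n_out)
        self.last_input = None

    def forward(self, inputs):
        # Forward phase: cache what the backward phase will need.
        self.last_input = inputs
        return inputs @ self.w + self.b

    def backprop(self, d_L_d_out, learn_rate):
        # A backward phase must be preceded by a forward phase.
        if self.last_input is None:
            raise RuntimeError("call forward() before backprop()")

        # Chain rule, using the gradients of totals noted above.
        d_L_d_w = np.outer(self.last_input, d_L_d_out)   # dt/dw = input
        d_L_d_b = d_L_d_out                               # dt/db = 1
        d_L_d_input = self.w @ d_L_d_out                  # dt/dinput = w

        # Update the weights only after d_L_d_input has been computed.
        self.w -= learn_rate * d_L_d_w
        self.b -= learn_rate * d_L_d_b

        # Return gradient, for whichever layer comes before this one.
        return d_L_d_input

# One training step on a toy loss L = 0.5 * sum(out ** 2), so dL/dout = out.
rng = np.random.default_rng(0)
layer = Dense(n_in=4, n_out=3, rng=rng)
x = rng.normal(size=4)

out = layer.forward(x)
d_L_d_input = layer.backprop(out, learn_rate=0.01)
```

The returned `d_L_d_input` has the same shape as the layer's input, which is what lets the preceding layer consume it as its own incoming gradient.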
